News

- [February 2025] Ivona Najdenkoska's paper (TULIP: Token-length upgraded CLIP) has been accepted at the ICLR 2025 conference.

For old news, please check this archived news page.

Team

Alumni

Participation in ICAI Labs

MultiX participates in the following labs of the Innovation Center for Artificial Intelligence:

- The AIM Lab (AI for Medical Imaging) is a collaborative initiative of the Inception Institute of Artificial Intelligence from the United Arab Emirates and the University of Amsterdam. It focuses on using artificial intelligence for medical image recognition.

- The National Police Lab AI is a collaborative initiative of the Dutch Police, Utrecht University, University of Amsterdam, and TU Delft. The lab aims to develop state-of-the-art AI techniques to improve safety in the Netherlands in a socially, legally, and ethically responsible way.

- The AI4Forensics Lab is a collaborative initiative of the Netherlands Forensic Institute and the University of Amsterdam. Our focus is on the study and development of artificial intelligence for forensic purposes.

List of Funded Grants

AI4Intelligence: from Multimodal Data to Trustworthy Evidence in Court

- Primarily funded by NWO and co-funded by others (€1.5M), 09/2022 - 09/2028

- Law enforcement has to process huge amounts of data from online platforms, digital marketplaces, and communication services, a task that AI can help with. In AI4Intelligence, the development of AI tools, their use by investigators, and legal regulation go hand in hand, so that investigations lead to trustworthy evidence that is admissible in court.

- Partners: Nationale Politie, NFI, Sustainable Rescue Foundation, TNO, Microsoft, SynerScope BV, CFLW Cyber Strategies, DuckDuckGoose, ZiuZ Forensics BV, BG.legal, The Hague Court of Appeal, Innovation Team Testlab OM

- Link to the News

AI4FILM

- Funded by ClickNL (€387K), 09/2022 - 09/2026

- With this project, we aim to develop novel AI techniques that are tailored to film by learning from analysis, production practice, and theory.

- Partners: Kaspar

VisXP: Interactive Visual Exploration of Media Archives

- Funded by ClickNL (€391K), 02/2021 - 12/2023

- This project aims to make AI technology widely applicable within media archives based on interactive learning interfaces that enable users to explore the data visually. This requires research into combining the different types of data sources within archives, both for analysis and for displaying and visualizing with an interface.

- Partners: The Netherlands Institute for Sound & Vision, RTL Nederland

- Project Link

List of Demos

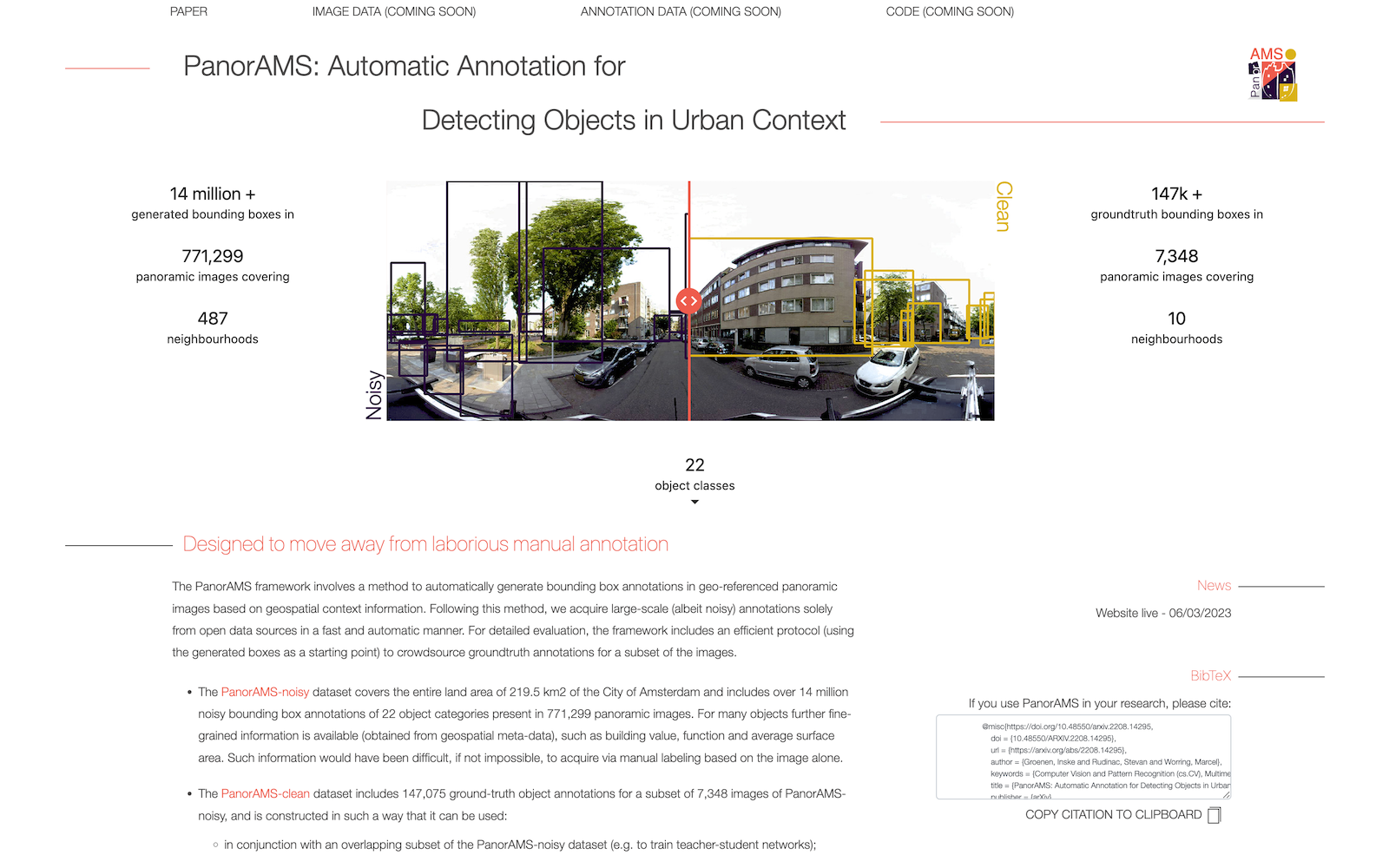

PanorAMS: Automatic Annotation for Detecting Objects in Urban Context

The PanorAMS framework provides a method for automatically generating bounding box annotations in geo-referenced panoramic images based on geospatial context information.

We acquire large-scale (albeit noisy) annotations from open data sources.

(website link, paper link)

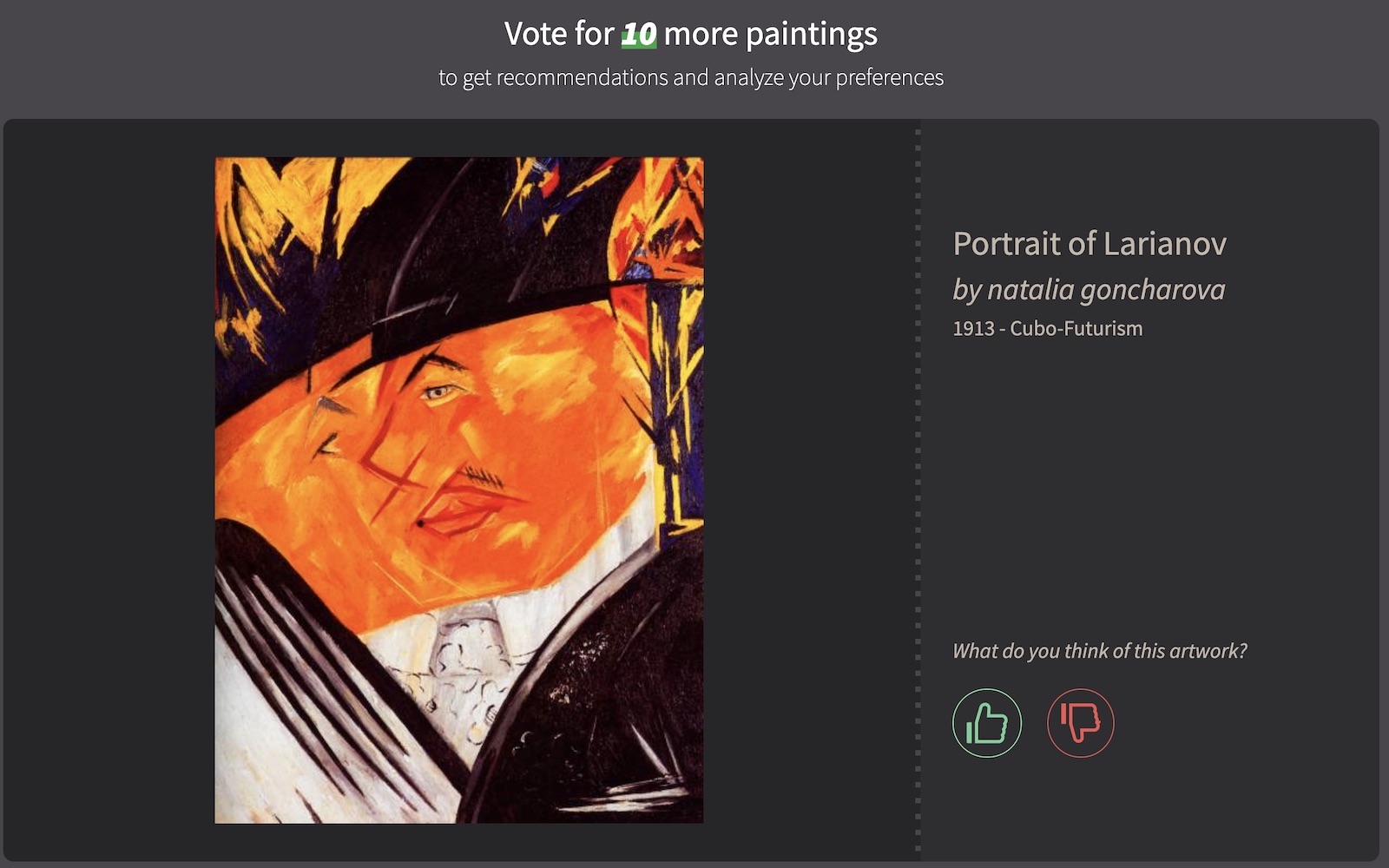

TindART: A Personal Visual Arts Recommender

TindART is a web-based visual artwork recommendation system.

The system has visual analytics controls that allow users to gain a deeper understanding of their art taste and refine their personal recommendation.

(website link, paper link)

OmniArt: A Large-scale Artistic Benchmark

OmniArt is a large-scale artistic benchmark dataset aggregated from multiple collections around the world.

It is designed for easy data handling and fast integration with popular deep learning frameworks.

(website link, paper link)

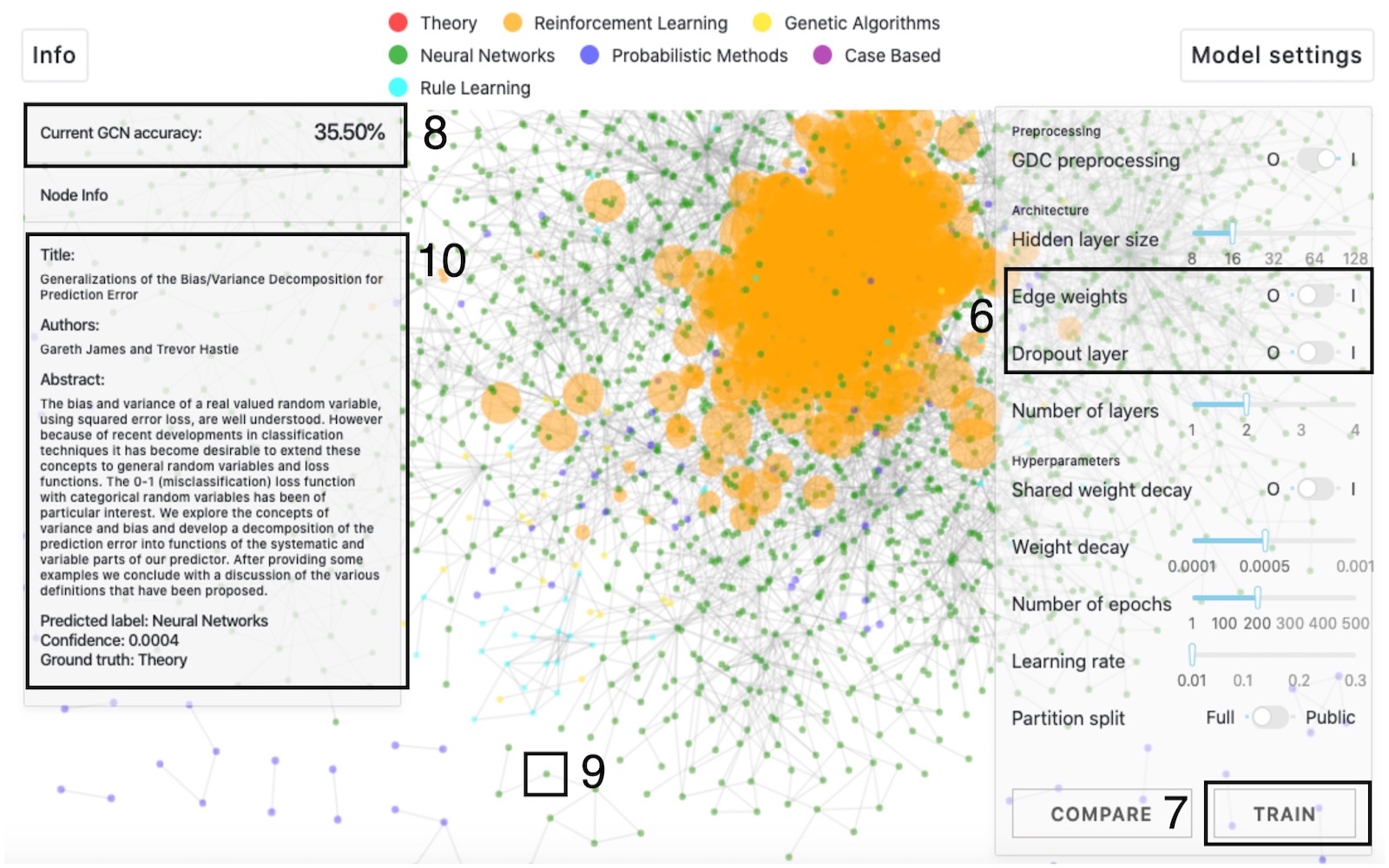

GCNIllustrator: Illustrating the Effect of Hyperparameters on Graph Convolutional Networks

GCNIllustrator is a visual analytics tool for illustrating the effect of hyperparameters on graph convolutional networks (GCNs).

It addresses one of the most tedious steps in training GCNs: the choice of hyperparameters and their influence on performance.

(video demo and paper link)

List of Open Source Courses

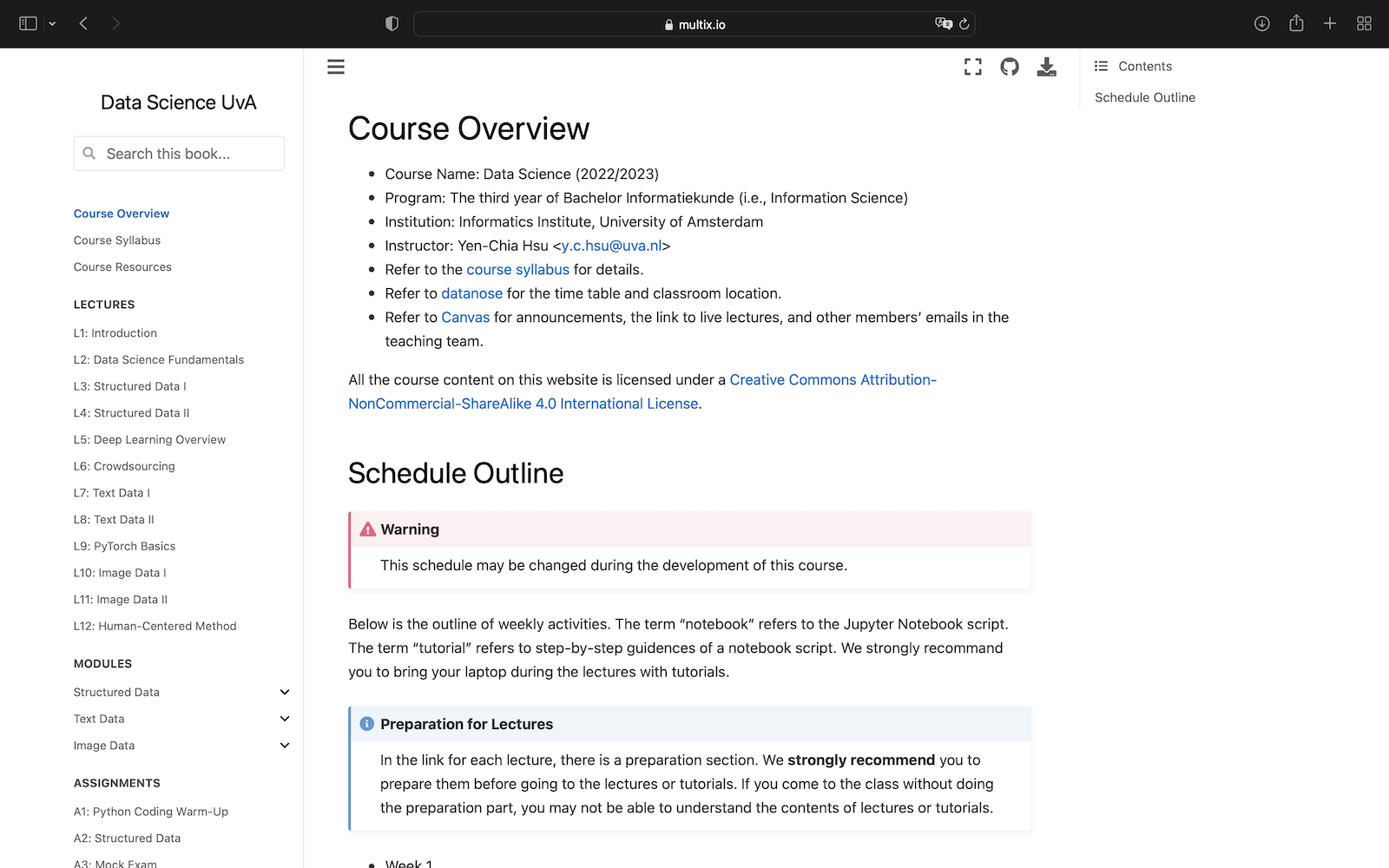

Data Science (Third Year of the UvA Bachelor Informatiekunde Program)

This course teaches data science pipelines with three modules in processing structured data, text, and images.

Course materials include Jupyter Notebooks for hands-on experience and lectures that explain the theory behind the implementations.

(website link)